New ARC-AGI-3 benchmark shows that humans still outperform LLMs at pretty basic thinking

ARC-AGI-3 aims to test how well AI systems can handle brand new problems. While people breeze through the challenges, the latest AI models still come up short.<br /> The article New ARC-AGI-3 benchmark shows that humans still outperform LLMs at pretty basic thinking appeared first on THE DECODER. [...]

Rating

Innovation

Pricing

Technology

Usability

We have discovered similar tools to what you are looking for. Check out our suggestions for similar AI tools.

Moonshot's Kimi K2 Thinking emerges as leading open source AI, outperforming GPT-5, Claude Sonnet 4.5 on key benchmarks

Even as concern and skepticism grows over U.S. AI startup OpenAI's buildout strategy and high spending commitments, Chinese open source AI providers are escalating their competition and one has e [...]

Samsung AI researcher's new, open reasoning model TRM outperforms models 10,000X larger — on specific problems

The trend of AI researchers developing new, small open source generative models that outperform far larger, proprietary peers continued this week with yet another staggering advancement.Alexia Jolicoe [...]

ARC-AGI-3 offers $2M to any AI that matches untrained humans, yet every frontier model scores below 1%

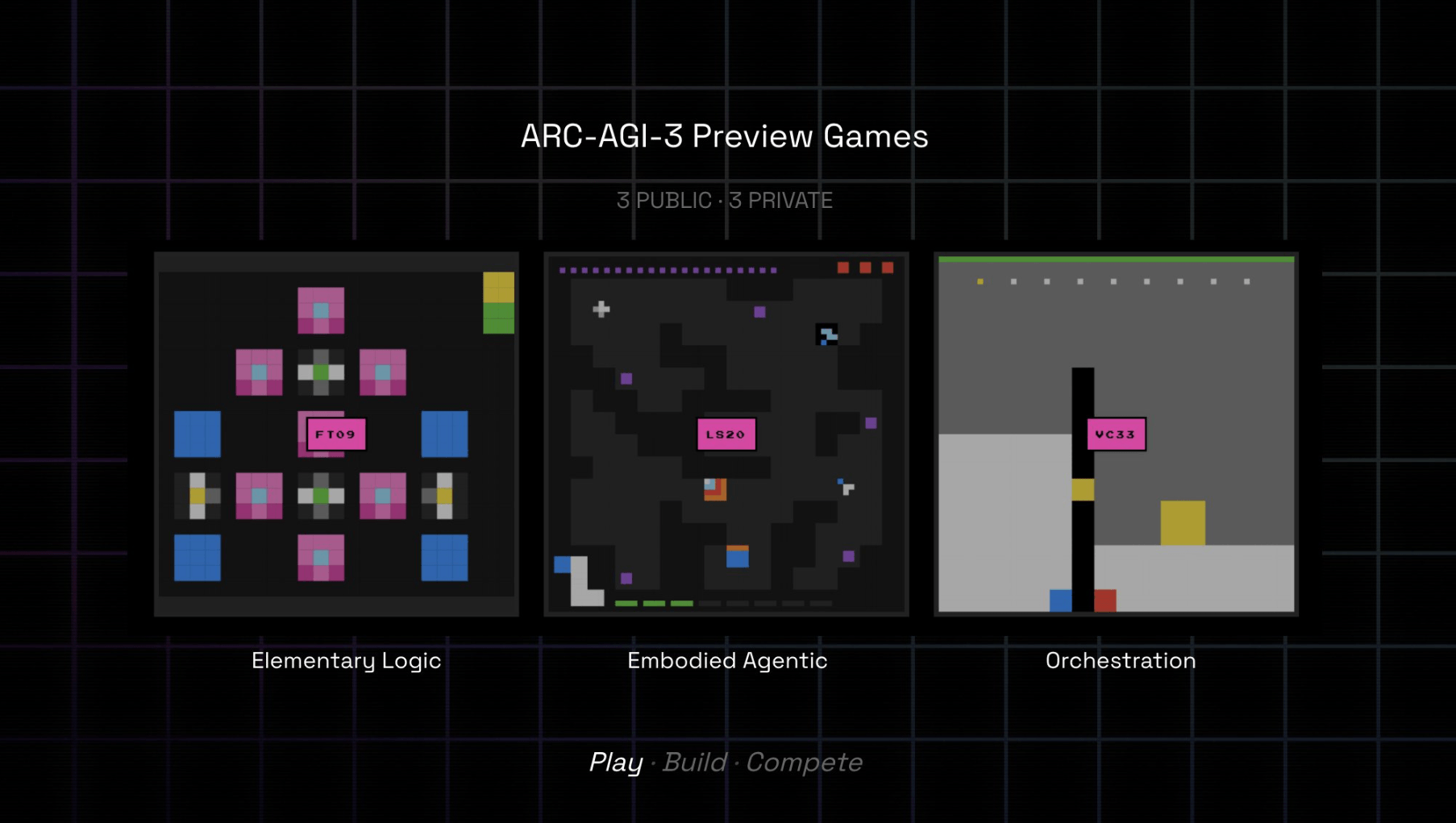

The new ARC-AGI-3 benchmark drops AI systems into interactive game environments that humans solve with ease. No frontier model breaks the 1 percent mark because the benchmark strips away their biggest [...]

Is Anthropic 'nerfing' Claude? Users increasingly report performance degradation as leaders push back

A growing number of developers and AI power users are taking to social media to accuse Anthropic of degrading the performance of Claude Opus 4.6 and Claude Code — intentionally or as an outcome of c [...]

Baidu just dropped an open-source multimodal AI that it claims beats GPT-5 and Gemini

Baidu Inc., China's largest search engine company, released a new artificial intelligence model on Monday that its developers claim outperforms competitors from Google and OpenAI on several visio [...]

Qwen3-Max Thinking beats Gemini 3 Pro and GPT-5.2 on Humanity's Last Exam (with search)

Chinese AI and tech firms continue to impress with their development of cutting-edge, state-of-the-art AI language models.Today, the one drawing eyeballs is Alibaba Cloud's Qwen Team of AI resear [...]

Databricks' OfficeQA uncovers disconnect: AI agents ace abstract tests but stall at 45% on enterprise docs

There is no shortage of AI benchmarks in the market today, with popular options like Humanity's Last Exam (HLE), ARC-AGI-2 and GDPval, among numerous others.AI agents excel at solving abstract ma [...]

Tiny AI model outperforms o3‑mini and Gemini 2.5 Pro in ARC‑AGI benchmark

A new mini-model called TRM shows that recursive reasoning with tiny networks can outperform large language models on tasks like Sudoku and the ARC-AGI test - using only a fraction of the compute powe [...]