Rating

Innovation

Pricing

Technology

Usability

We have discovered similar tools to what you are looking for. Check out our suggestions for similar AI tools.

Self-improving language models are becoming reality with MIT's updated SEAL technique

Researchers at the Massachusetts Institute of Technology (MIT) are gaining renewed attention for developing and open sourcing a technique that allows large language models (LLMs) — like those underp [...]

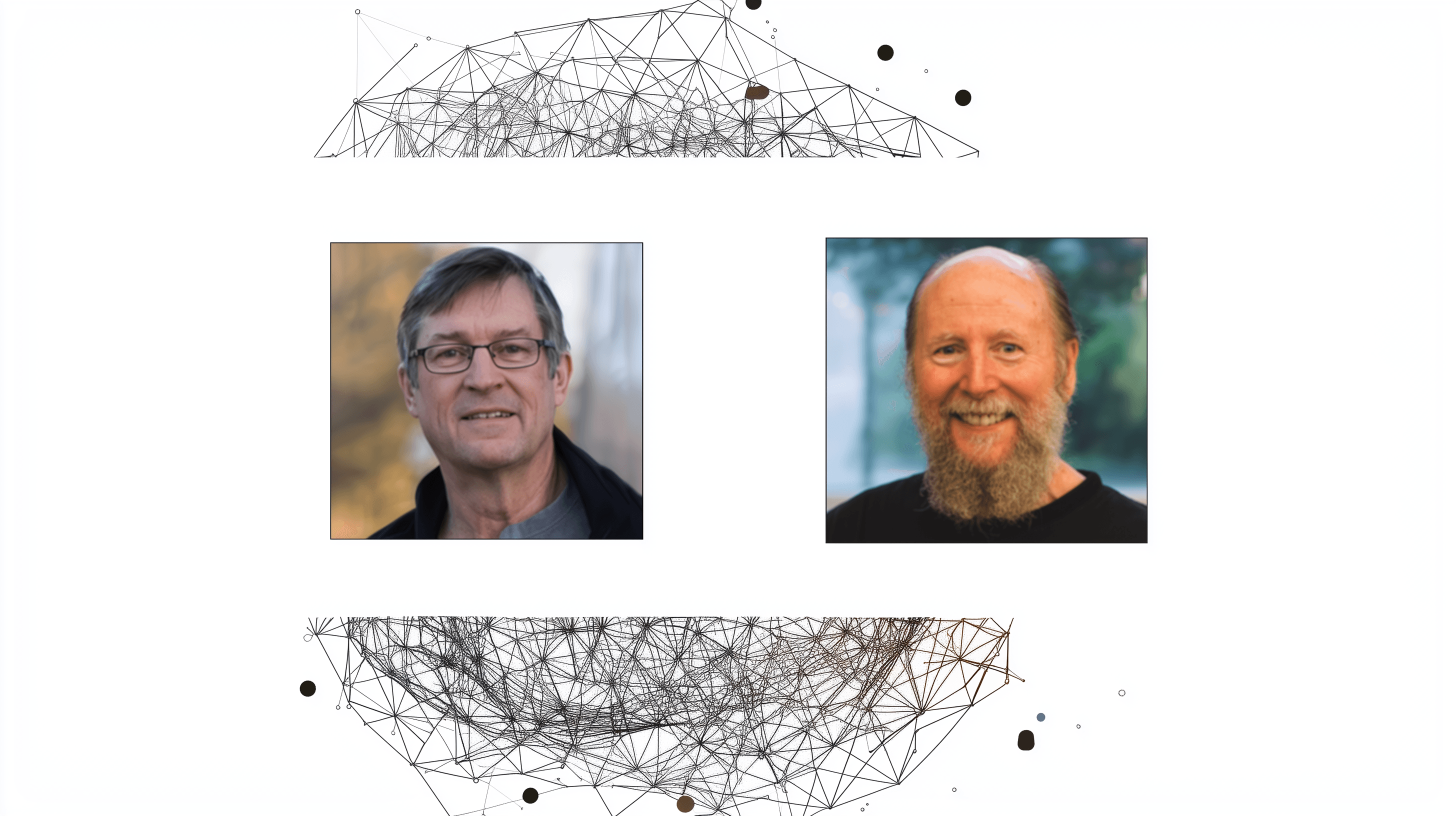

Algorithms from the 1980s power today's AI breakthroughs, earn Turing Award for researchers

Andrew Barto and Richard Sutton have won the 2024 A.M. Turing Award for developing key technologies that power modern artificial intelligence, including recent breakthroughs in large reasoning models. [...]

Nvidia researchers boost LLMs reasoning skills by getting them to 'think' during pre-training

Researchers at Nvidia have developed a new technique that flips the script on how large language models (LLMs) learn to reason. The method, called reinforcement learning pre-training (RLP), integrates [...]

MIT's new fine-tuning method lets LLMs learn new skills without losing old ones

When enterprises fine-tune LLMs for new tasks, they risk breaking everything the models already know. This forces companies to maintain separate models for every skill.Researchers at MIT, the Improbab [...]

Google finds that AI agents learn to cooperate when trained against unpredictable opponents

Training standard AI models against a diverse pool of opponents — rather than building complex hardcoded coordination rules — is enough to produce cooperative multi-agent systems that adapt to eac [...]

Google’s new AI training method helps small models tackle complex reasoning

Researchers at Google Cloud and UCLA have proposed a new reinforcement learning framework that significantly improves the ability of language models to learn very challenging multi-step reasoning task [...]

AI agents fail 63% of the time on complex tasks. Patronus AI says its new 'living' training worlds can fix that.

Patronus AI, the artificial intelligence evaluation startup backed by $20 million from investors including Lightspeed Venture Partners and Datadog, unveiled a new training architecture Tuesday that it [...]

AI pioneers warn OpenAI's corporate overhaul could betray its original mission for humanity

A group of former OpenAI employees, researchers, and nonprofit organizations is urging regulators to block OpenAI’s proposed corporate restructuring, arguing it threatens the company’s founding mi [...]