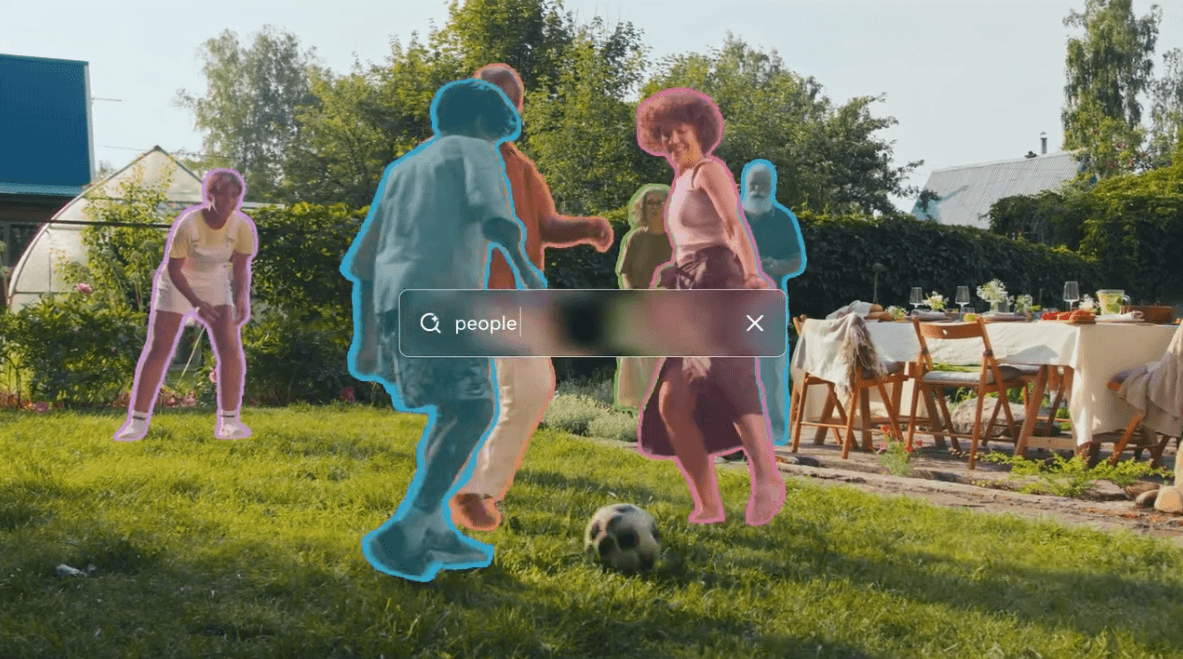

Meta's SAM 3 segmentation model blurs the boundary between language and vision

Meta has released the third generation of its "Segment Anything Model." Unlike standard models limited to fixed categories, SAM 3 uses an open vocabulary to understand both images and videos. The system relies on a new training method that combines human and AI annotators.

The article "Meta's SAM 3 segmentation model blurs the boundary between language and vision" appeared first on THE DECODER. [...]

How an Oregon court became the stage for a $115,000 showdown between Meta and Facebook creators

Some of the most successful creators on Facebook aren't names you'd ever recognize. In fact, many of their pages don't have a face or recognizable persona attached. Instead, they run pa [...]

Microsoft built Phi-4-reasoning-vision-15B to know when to think — and when thinking is a waste of time

Microsoft on Tuesday released Phi-4-reasoning-vision-15B, a compact open-weight multimodal AI model that the company says matches or exceeds the performance of systems many times its size — while co [...]

Goodbye, Llama? Meta launches new proprietary AI model Muse Spark — first since Superintelligence Labs' formation

Meta has been one of the most interesting companies of the generative AI era — initially gaining a loyal and huge following of users for the release of its mostly open source Llama family of large l [...]

Everything Meta announced at Connect 2025: Second-gen Ray-Ban Meta, Oakley Meta Vanguard and Meta Ray-Ban Display

At Meta Connect 2025's kickoff event, Mark Zuckerberg unveiled a trio of new smart glasses, including the company's first model with augmented reality. Meta's boss also announced the second generation [...]