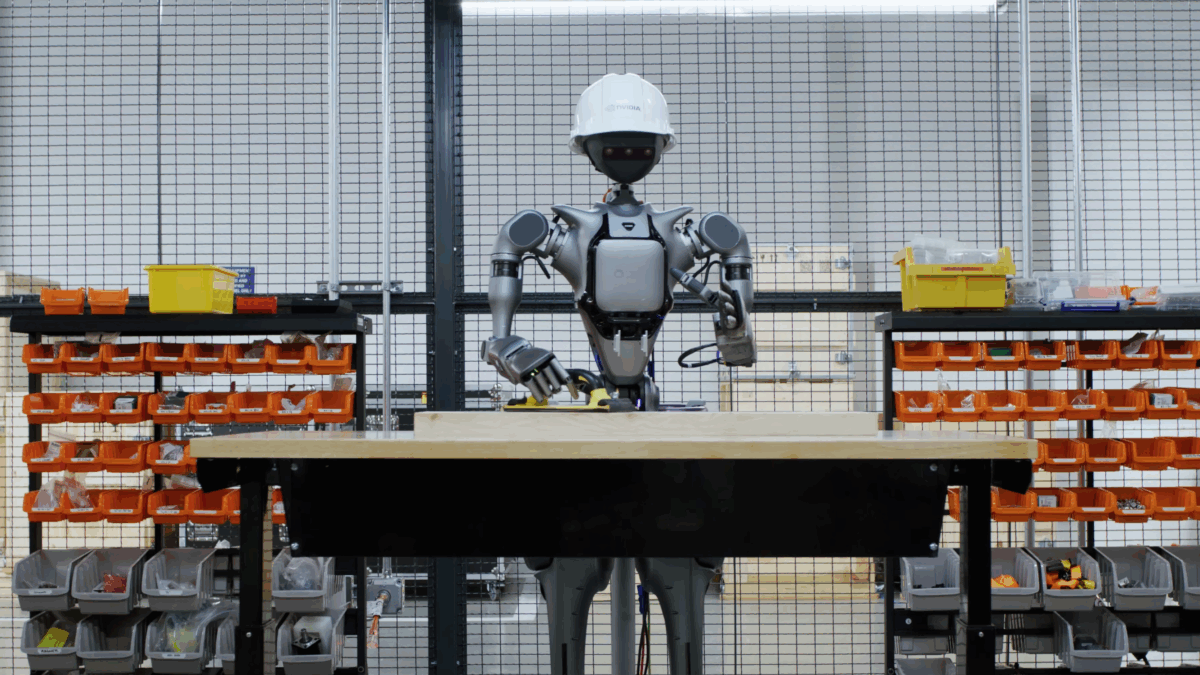

AI models fail at robot control without human-designed building blocks but agentic scaffolding closes the gap

A new framework from Nvidia, UC Berkeley, and Stanford systematically tests how well AI models can control robots through code. The findings: without human-designed abstractions, even top models fail, but methods like targeted test-time compute scaling close the gap. This article first appeared on The Decoder. [...]

We have discovered similar tools to what you are looking for. Check out our suggestions for similar AI tools.

Nvidia's agentic AI stack is the first major platform to ship with security at launch, but governance gaps remain

For the first time on a major AI platform release, security shipped at launch — not bolted on 18 months later. At Nvidia GTC this week, five security vendors announced protection for Nvidia's a [...]

Most enterprises can't stop stage-three AI agent threats, VentureBeat survey finds

A rogue AI agent at Meta passed every identity check and still exposed sensitive data to unauthorized employees in March. Two weeks later, Mercor, a $10 billion AI startup, confirmed a supply-chain br [...]

Upwork study shows AI agents excel with human partners but fail independently

Artificial intelligence agents powered by the world's most advanced language models routinely fail to complete even straightforward professional tasks on their own, according to groundbreaking re [...]

The robots we saw at CES 2026: The lovable, the creepy and the utterly confusing

CES always has its share of attention-grabbing robots. But this year in particular seemed to be a landmark year for robotics. The advancement in AI technology has not only given robots better “brain [...]

Agentic AI security breaches are coming: 7 ways to make sure it's not your firm

AI agents, task-specific models designed to operate autonomously or semi-autonomously given instructions, are being widely implemented across enterprises (up to 79% of all surveyed for a PwC rep [...]